No application code in this post. Here we establish the starting point for the series.

Event Driven Programming is a popular way to approach complex systems, with a heavy emphasis on breaking applications apart into smaller, more fundamental pieces. Done correctly, an event driven approach can make coding more fun and concise and allow for “specialization” over “generalization”. In doing so, we get closer to code that does only what it needs to do and nothing more, which should always be our aim as software developers.

In realizing this for cloud applications, I have become convinced that, with few exceptions, serverless technologies must be employed as the glue for complex systems. The more they mature, the greater the flexibility they offer the architect. In truth, not using serverless can, and should, be viewed in most cases as an anti-pattern. Note that I am referring explicitly to tooling such as AWS Lambda, Google Cloud Functions, and Azure Functions. I am not speaking of “codeless” solutions such as Azure Logic Apps or similar tools on other platforms; the purpose of such tools is mainly to allow less technical people to build out solutions. Serverless technologies, such as those mentioned, remain in the domain of the engineer/developer.

Very often I find that engineers view serverless functions as more of a “one off” technology, good for that basic endpoint that can run on a Consumption plan. As I have shown before, Azure Functions in particular are very mature and, through the use of “bindings”, can enable highly sophisticated scenarios without the need for excessive amounts of boilerplate code. Further, offerings such as Durable Functions in Azure (or Step Functions in AWS) take serverless a step further and actually maintain a semblance of state between calls, thus enabling sophisticated multi-part workflows that accept a wide variety of inputs to move the workflow forward. I want to demonstrate this in this series.

Planning Phase

As with any application, planning is crucial, and our File Approver application shall be no different. In fact, with event driven applications planning is especially crucial because, while event driven systems offer a host of advantages, they also require certain questions to be answered. Some common questions:

- How can I ensure events get delivered to the components of my system?

- How do I handle a failure in one component but success in another?

- How can I be alerted if events start failing?

- How can I ensure events that are sent during downtime are processed? And in the correct order?

Understandably, I hope, these questions are too big to answer as part of this post, but they are questions I hope you, as an architect, are asking your team when you embark on this style of architecture.

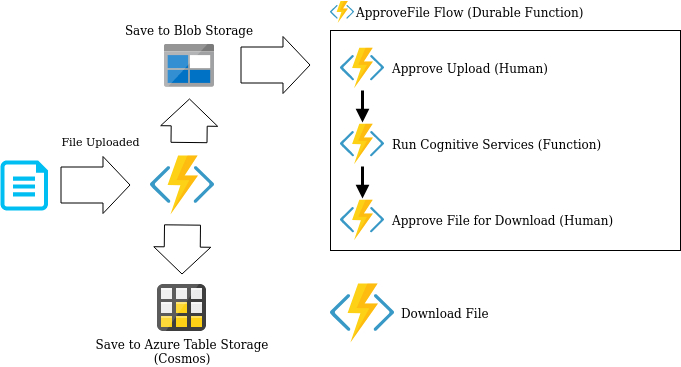

For our application, we will adopt a focus on the “golden path”. That is, the path which assumes everything goes correctly. The following diagram shows our workflow:

Our flow is quite simple and straightforward:

- Our user uploads a file to an Azure Function that operates off an HttpTrigger

- After receiving this file, the binary data is written to Azure Blob Storage and a related entry is made in Azure Table Storage

- The creation of the blob triggers a Durable Functions orchestration, which manages a workflow that gathers data about the file contents and ultimately allows users to download the file

- Our Durable workflow contains three steps, two of which pause the workflow while it waits for human actions (done via HTTP API calls). The other is a “pure function” that is only called as part of this workflow

- Once all steps are complete, the file is marked available for download. When requested, the Download File function returns the gathered metadata for the file AND a generated SAS token that allows the file to be downloaded for a period of one hour
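To make the pause-and-resume idea concrete before we reach the real implementation, here is a minimal plain-Python sketch of the workflow described above. It does not use the Durable Functions API, and it is not the code from the repository. The names `FileRecord`, `analyze_contents`, and `approval_workflow`, the step labels, and the ordering of the three steps are all assumptions made for illustration. A generator stands in for the orchestration: each `yield` is a point where the workflow waits for a human action.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class FileRecord:
    """Hypothetical stand-in for the Table Storage entry that tracks one file."""
    file_id: str
    metadata: dict = field(default_factory=dict)
    available: bool = False
    link_expires_at: Optional[datetime] = None


def analyze_contents(file_id: str) -> dict:
    """The 'pure function' step: placeholder analysis that needs no human input."""
    return {"analyzed": True}


def approval_workflow(record: FileRecord, now: datetime):
    """Toy orchestration: pauses at each yield until an external event is sent in."""
    # Step 1 (assumed order): pause until the first human action arrives.
    first = yield "AwaitFirstApproval"
    record.metadata["first_approval"] = first

    # Step 2: the pure function, called only from inside this workflow.
    record.metadata.update(analyze_contents(record.file_id))

    # Step 3: pause again for the second human action.
    second = yield "AwaitSecondApproval"
    record.metadata["second_approval"] = second

    # All steps complete: mark the file downloadable for one hour.
    record.available = True
    record.link_expires_at = now + timedelta(hours=1)
    return record


# Usage: each send() plays the role of an HTTP call that resumes the workflow.
record = FileRecord(file_id="example-file")
started = datetime(2020, 1, 1, tzinfo=timezone.utc)
workflow = approval_workflow(record, started)
next(workflow)                      # runs until the first pause
workflow.send("first approver")     # resumes, then pauses at the second step
try:
    workflow.send("second approver")
except StopIteration as finished:
    completed = finished.value      # the record, now marked available
```

The real service persists the state between calls so the workflow survives restarts. This sketch keeps everything in memory and only shows the shape of the control flow.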

Of course, we could accomplish this same goal with a traditional approach, but that would require a far more sophisticated solution than the one I ended up with. For reference, here is the complete source code: https://github.com/jfarrell-examples/DurableFunctionExample

Azure Function Bindings

Bindings are a crucial component of efficient Azure Function design. At present I am not aware of a similar concept in AWS, but I do not discount its existence. Using bindings, we can write FAR LESS code and make our functions easier to understand, with more focus on the actual task instead of the logic for connecting to and reading from various data sources. In addition, the triggers tie very nicely into the whole Event Driven paradigm. You can find a complete list of ALL triggers here:

https://docs.microsoft.com/en-us/azure/azure-functions/functions-bindings-storage-blob (Note: this is a direct link to the Blob storage triggers; see the left-hand side of that page for a complete list.)

Throughout my code sample you will see references to bindings for Durable Functions, Blobs, Azure Table Storage, and Http. Understanding these bindings is, as I said, crucial to your sanity when developing Azure Functions.
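To illustrate why bindings cut down on boilerplate, here is a toy plain-Python sketch of the idea. It is not the Azure Functions API. The `blob_input` decorator, the in-memory `BLOBS` and `TABLE` dictionaries, and the `describe_file` function are all hypothetical names invented for this example. The point is only that the function body declares what it needs and never touches connection or storage logic itself.

```python
from typing import Callable, Dict

# In-memory stand-ins for Blob Storage and Table Storage (hypothetical).
BLOBS: Dict[str, bytes] = {"uploads/report.txt": b"hello world"}
TABLE: Dict[str, dict] = {}


def blob_input(path_template: str) -> Callable:
    """Toy 'binding': loads the blob and hands its bytes to the function."""
    def decorator(func: Callable[[str, bytes], dict]) -> Callable[[str], dict]:
        def wrapper(name: str) -> dict:
            # Toy input binding: resolve the path and read the blob.
            content = BLOBS[path_template.format(name=name)]
            row = func(name, content)
            # Toy output binding: persist whatever the function returns.
            TABLE[name] = row
            return row
        return wrapper
    return decorator


@blob_input("uploads/{name}")
def describe_file(name: str, content: bytes) -> dict:
    # Only the task itself: no client setup, no connection handling.
    return {"name": name, "size": len(content)}


row = describe_file("report.txt")  # also stored in TABLE["report.txt"]
```

In a real Function the runtime plays the role of the decorator, resolving the declared inputs and outputs before and after your code runs.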

Visual Studio Code with Azure Function Tools

I recommend Visual Studio Code when developing any modern application since it's lighter and the extensions give you a tremendous amount of flexibility. This is not to say you cannot use Visual Studio, where the same tools and support exist. I just find Visual Studio Code (with the right extensions) to be the superior product; YMMV.

Once you have Visual Studio Code you will want to install two separate things:

- Azure Functions extension for VSCode

- Azure Functions Core Tools

I really cannot say enough good things about Azure Functions Core Tools. It has come a long way from version 1.x, and the recent versions are superb. In fact, I was able to complete my ENTIRE example without ever deploying to Azure, using breakpoints all along the way.

The extension for Visual Studio Code is also very helpful for both creating and deploying Azure Functions. Unlike with traditional .NET Core applications, I do not recommend using the command line to create the project. Instead, open Visual Studio Code and access your Azure Tools. If you have the Functions extension installed, you will see a dedicated blade; expand it.

The first icon (looks like a folder) enables you to create a Function project through Code. I recommend this approach since it gets you started very easily. I have not ruled out the existence of templates that could be downloaded and used through dotnet new, but this works well enough.

Keep in mind that a Function project is 1:1 with a Function app, so you will want to target an existing directory if you plan to have more than one in your solution. Note that this is likely completely different in Visual Studio; I do not have any advice for that approach.

When you go through the creation process you will be asked to create a function. For now, you can create whatever you like; I will dive into our first function in Part 2. As you create subsequent functions, use the lightning icon next to the folder. Doing this is not required, and it is perfectly acceptable to build your functions up by hand, but using the icon gets the VSCode settings correct to enable debugging with the Core Tools, so I highly recommend it.

The arrow (third icon) is for deploying. Of course, we should never use this outside of testing since we would like a CI/CD process to test and deploy code efficiently. We won't be covering CI/CD for Azure Functions in this series, but we certainly will in a future series.

Conclusion

OK, so now we understand a little about what Durable Functions are and how they play a role in Event Driven Programming. I also walked through the tools that are used when developing Azure Functions and how to use them.

Moving forward into Part 2, we will construct our File Upload portion of the pipeline and show how it starts our Durable Function workflow.

Once again the code is available here:

https://github.com/jfarrell-examples/DurableFunctionExample
