This post is a follow-up to the first post I made (here) about building a Cloud Function in Google Cloud that accepts an image and puts it in my blob storage. This is a pretty common scenario for handling file uploads on the web when using the cloud. But by itself it is not overly useful.

I always like to use this simple example to explore a platform's abilities for deferred processing: a trigger fires when the image lands in storage and performs Computer Vision processing on it. It is a very common pattern.

Add the Database

For any application like this we need a persistent storage mechanism. Our processing should not happen in real time, so we need to be able to store a reference to the file and update it once processing finishes.

Generally when I do this example I like to use a NoSQL database since it fits the processing model very well. However, I decided to deviate from my standard approach and opt for a managed MySQL instance through Google.

This is all relatively simple. Just make sure you are using MySQL and not PostgreSQL (PG). It's not clear to me that, as of this writing, you can easily connect to PG from a GCF (Google Cloud Function).

The actual provisioning step can take a bit of time, so just keep that in mind.

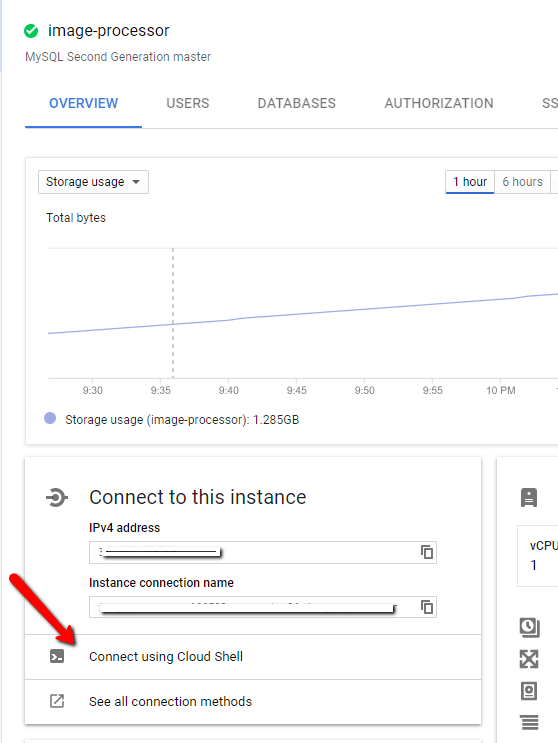

At this time, there does not appear to be any sort of GUI for managing the MySQL instance, so you will need to remember your SQL and drop into the Google Cloud Shell.

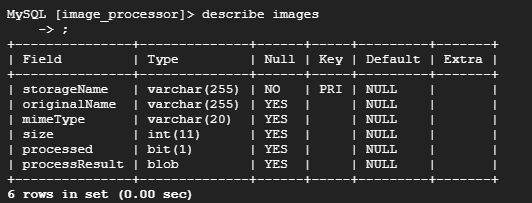

Once you are here you can create your database. You will want a table within that database to store the image references. Your schema may vary; here is what I chose:

The storageName is unique since I am assigning it during upload, so the unique name of the image is the same in the bucket and in the table, which allows me to do lookups. The rest of the values are designed to support a UI.
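As a rough sketch of what such a table could look like: only the unique storageName and a blob column for the Vision JSON come from this post. The table name images and the columns id, displayName, uploadedAt, and imageData are hypothetical, as is the createImagesTable helper. The statement itself can just as well be pasted into the Cloud Shell session.

```javascript
// Hypothetical schema: only storageName (unique) and a blob column for the
// Vision JSON are described in the post; every other name is an assumption.
const CREATE_IMAGES_TABLE = `
  CREATE TABLE IF NOT EXISTS images (
    id INT AUTO_INCREMENT PRIMARY KEY,
    storageName VARCHAR(255) NOT NULL UNIQUE,
    displayName VARCHAR(255) NULL,
    uploadedAt DATETIME DEFAULT CURRENT_TIMESTAMP,
    imageData MEDIUMBLOB NULL
  )`;

// Runs the statement on any object exposing a mysql-style query(sql, callback).
function createImagesTable(connection, callback) {
  connection.query(CREATE_IMAGES_TABLE, callback);
}
```

MEDIUMBLOB is used here instead of a plain BLOB only because a combined Vision response could plausibly exceed 64 KB; that choice is a guess, not a measured requirement.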

Inserting the record

You will want to update your upload trigger to insert the record into this table as the file comes across the wire. I won't show that code here; you can look at the backend trigger to see how to connect to the MySQL database. Once you have that figured out, it is a matter of running the INSERT statement.
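For illustration only, the insert could look roughly like the sketch below. The table and column names (images, storageName, displayName) and the insertImageRecord helper are assumptions; the query(sql, values, callback) call is the standard form offered by the mysql npm package.

```javascript
// Sketch of the insert performed by the upload function.
// Table and column names are hypothetical.
function insertImageRecord(connection, storageName, displayName, callback) {
  // The ? placeholders let the mysql library escape the values for us.
  const sql = 'INSERT INTO images (storageName, displayName) VALUES (?, ?)';
  connection.query(sql, [storageName, displayName], callback);
}
```

Passing the connection in as an argument keeps the function easy to exercise with a stand-in object, which helps when the only other way to test is redeploying the function.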

Building the Backend Trigger

One of the big draws of Serverless is how easily it lets you integrate with the platform itself. By their nature, Cloud Platforms can easily produce events for the various things happening within them. Serverless functions are a great way to listen for these events through the notion of a trigger.

In our case, Google will raise an event when new blobs are created (or modified), among other actions. So, we can configure our trigger to fire on this event. Because Serverless scales automatically with load, this is an ideal way to handle this sort of deferred processing model without having to write our own plumbing.

Google makes this quite easy: when you elect to create a Cloud Function you will be asked what sort of trigger you want to respond to. For our first part, the upload function, we listened for an HTTP event (a POST specifically). For this function, we will want to listen to a particular bucket for when new items are finalized (that is Google’s term for created or updated).

As you can see, we also have the ability to listen to a Pub/Sub topic. This gives you an idea of how powerful these triggers can be, as normally you would have to write the polling service to listen for events; this does it for you automatically.

The Code

So, we need to connect to MySQL. Oddly enough, at the time of this writing, this was not officially supported, due to GCF still being in beta from what I am told. I found a good resource that discusses this problem and offers some solutions here.

To summarize the link, we can establish a connection, but there appears to be some question about the scalability of the connection. Personally, this doesn't seem like something Google should leave to a third-party library; they should offer a Google Cloud specific mechanism for hitting MySQL in their platform. We shall see if they offer this once GCF goes GA.

For now, you will want to run npm install --save mysql. Here is the code to make the connection:

(This is NOT the trigger code)
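As a stand-in sketch of such a connection, assuming the mysql package and a Cloud SQL Unix socket under /cloudsql/: the setting names (instanceConnectionName, user, password, database) and both helper functions are hypothetical.

```javascript
// Build the options object for mysql.createConnection.
// All property names on `settings` are assumptions made for this sketch.
function buildConnectionConfig(settings) {
  return {
    // Cloud SQL is reached over a Unix socket named after the instance
    // connection name (project:region:instance).
    socketPath: `/cloudsql/${settings.instanceConnectionName}`,
    user: settings.user,
    password: settings.password,
    database: settings.database,
  };
}

function connect(settings) {
  // Required lazily so the config helper works without the package installed.
  const mysql = require('mysql');
  return mysql.createConnection(buildConnectionConfig(settings));
}
```

Calling connect({ instanceConnectionName: 'my-project:us-central1:my-instance', user: 'appuser', password: '...', database: 'images' }) would then return a connection object; those values are placeholders.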

You can get the socketPath value from here:

While you can use root to connect, I would advise creating an additional user.

From there it's a simple matter of calling update. The event that fires to trigger the method includes the name of the new or updated blob, so we pass it into our method and call it imageBucketName.
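A sketch of what that update could look like is below. The names updateInDatabase and imageBucketName come from this post; the parameter order, the table name images, and the column name imageData are assumptions.

```javascript
// Store the serialized Vision result on the row whose storageName matches
// the object that triggered the function. Table/column names are hypothetical.
function updateInDatabase(connection, imageBucketName, imageData, callback) {
  const sql = 'UPDATE images SET imageData = ? WHERE storageName = ?';
  const values = [JSON.stringify(imageData), imageBucketName];
  connection.query(sql, values, callback);
}
```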

Getting Our Image Data

So we are kind of going out of order, since the update above only makes sense if you have data to update with, and we don't, or at least not yet. What we want to do is use the Google Vision API to analyze the image and return a JSON block representing various features of the image.

To start, you will want to navigate to the Vision API in the Cloud Console and make sure the API is enabled for your project. It is pretty hard to miss this since they pop up a dialog to enable it when you first enter.

Use npm install --save @google-cloud/vision to get the necessary library for talking to the Vision API from your GCF.

Ok, I am not going to sugarcoat this: Google has quite a bit of work to do on the documentation for the API. It is hard to understand what is there and how to access it. Initially I was going to use a Promise.all to fire off calls to all of the annotators. However, after examining the response of the Annotate Labels call I realized that it was designed with the idea of batching the calls in mind. This led to a great hunt for how to do this. I was able to solve it, but I literally had to spelunk through the NPM package to figure out how to tell it which APIs I wanted it to call. This is what I ended up with:

The weird part here is that the docs seemed to suggest I don't need the hardcoded strings, but I couldn't figure out how to reference them through the API. So, I still have to work that out.
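A sketch of one way to request several annotations in a single call, assuming the ImageAnnotatorClient from @google-cloud/vision and its annotateImage method. The three feature types are picked because labels, the racy check, and web matches are the ones discussed in this post; the actual list used may differ. The helper names are hypothetical.

```javascript
// Feature types as hardcoded strings; this particular selection is an assumption.
const FEATURE_TYPES = ['LABEL_DETECTION', 'SAFE_SEARCH_DETECTION', 'WEB_DETECTION'];

// Build one request that asks for every listed feature on a Cloud Storage object.
function buildAnnotateRequest(bucket, name, featureTypes) {
  return {
    image: { source: { imageUri: `gs://${bucket}/${name}` } },
    features: featureTypes.map((type) => ({ type })),
  };
}

// `client` is expected to be `new vision.ImageAnnotatorClient()`.
// annotateImage resolves to an array whose first element is the response.
function annotate(client, bucket, name) {
  const request = buildAnnotateRequest(bucket, name, FEATURE_TYPES);
  return client.annotateImage(request).then((responses) => responses[0]);
}
```

The resolved response should carry one property per requested feature (for example labelAnnotations and safeSearchAnnotation), which is the large JSON block that ends up in the database.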

The updateInDatabase method is the call I discussed above. The call to the Vision API ends up generating a large block of JSON that I drop into a blob column in the MySQL table. This is a big reason I generally go with a NoSQL database, since these sorts of JSON responses are easier to work with there than they are in a relational database like MySQL.

Summary

Here is a diagram of what we have built in these tutorials:

We can see that when the user submits an image, that image is immediately stored in blob storage. Once that is complete we insert the new record in MySQL while, at the same time, the storage event fires and starts the Storage Trigger function. The reason I opted for this approach is that I don't want images in MySQL that don't exist in storage, since this is where the query result for the user’s image list will come from and I don't want blanks. There is a potential risk that we could return from the Vision API before the record is created, but that is VERY unlikely given the relative speed of the insert and the Vision processing.
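If that unlikely race ever did happen, the UPDATE would simply match no rows. A small sketch of how it could be surfaced instead of passing silently, assuming the mysql package's result object and its affectedRows count (the wrapper name expectRowUpdated is hypothetical):

```javascript
// Wrap a query callback so that "no row matched" is reported as an error.
function expectRowUpdated(callback) {
  return (err, result) => {
    if (err) return callback(err);
    if (result.affectedRows === 0) {
      // The insert from the upload function has not landed yet.
      return callback(new Error('No image record found yet; retry later'));
    }
    callback(null, result);
  };
}
```

It would be passed in place of the plain callback, as in connection.query(sql, values, expectRowUpdated(done)), leaving the caller to decide whether to log or retry.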

The Storage Trigger Cloud Function takes the image, runs it against the Vision API and then updates the record in the database. All pretty standard.

Thoughts

So, in the previous entry I talked about how I tried to use the emulator to develop locally. I didn't here. The emulator just doesn't feel far enough along to be overly useful for a scenario like this. Instead I used the streaming logs feature for Cloud Functions and copied and pasted my code in via the Inline Editor. I would then run the function with console.log statements and address any errors. It was time consuming and inefficient but, ultimately, I got through it. It would terrify me for a more complex project though.

Interestingly, I had assumed that Google’s Vision API would be better than Azure's and AWS's; it wasn't. I have a standard test for racy images that I run, and it felt the picture of my wife was more racy than the bikini model pic I use. Not sure if the algorithms have not been trained that well due to lack of use, but I was very surprised that Azure is still, by far, the best and Google comes in dead last.

The one nice thing I found in the GCP Vision platform is the ability to find occurrences of the image on the web. You can give it an image and find out if it's on the public web somewhere else. I see this being valuable for enforcing copyright.

But my general feeling is Google lacks maturity compared to Azure and AWS; a friend of mine even called it “the Windows Phone of the Cloud platforms”, which is kind of true. You would think Google would have been first, given that they more or less pioneered horizontal computing, which is the basis for Cloud Computing, and that their ML/AI would be top notch, as that is what they are more or less known for. It was quite surprising to go through this exercise.

Ultimately the big question is, can Google survive in a space so heavily dominated by AWS? Azure has carved out a nice chunk but really, the space belongs to Amazon. It will be interesting to see if Google keeps its attention here and tries to carve out a nice niche or ends up abandoning the public cloud offering. We shall see.